Small Language Models (SLMs) Simplified: What You Need to Know, Plus This Month's Med AI Gems 💎

Updates on Artificial Intelligence in Medicine 🤖💊

“People are so busy marveling (or panicking) about the future of AI in healthcare that they’re overlooking its real superpower today: making the boring, broken stuff actually work. AI has tremendous potential to fix fundamental problems this industry has kicked down the road for decades. It’s taking on everyday speed bumps that delay care and frustrate everyone, like prior authorizations that take seven days when they should take seven minutes.”

Colin Banas, M.D., M.H.A., Chief Medical Officer at DrFirst

Welcome to The ‘Med AI’ Capsule Newsletter, your go-to source for exploring how AI is transforming medicine! Whether you're a medical professional 👩‍⚕️, a tech enthusiast 💻, or simply curious 🧠, The ‘Med AI’ Capsule is for you! Stay ahead of the curve with the latest trends, insights, and updates in the rapidly evolving world of AI in medicine. 🚀

In today’s capsule:

5 QnA Primer

4 Research Picks

3 Learning Resources

2 Worth-Attending Events

1 Industry Spotlight, and more...

Time to Read: Around 8-10 minutes.

💬 5 QnA Primer

The concept for today is Small Language Models (SLMs).

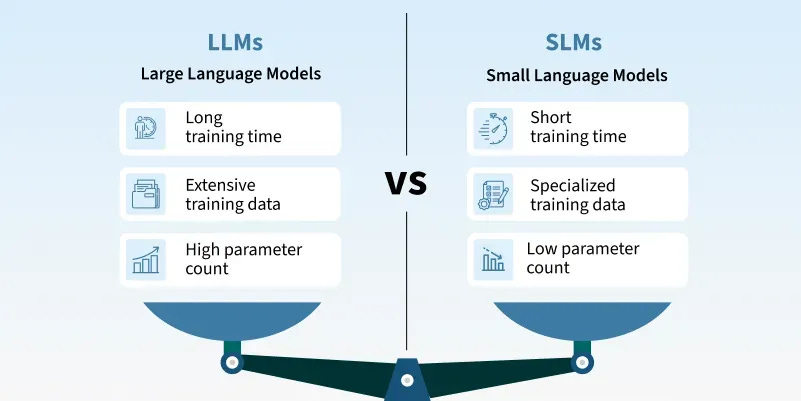

Q1: What are Small Language Models (SLMs)?

Small Language Models (SLMs) are compact AI language models designed to perform language tasks efficiently. They are much smaller than Large Language Models (LLMs). SLMs typically have millions to a few billion parameters, while LLMs have tens or hundreds of billions. As a result, SLMs need far less computing power, memory, and energy. This allows them to run on local hardware such as on-premises hospital servers, smartphones, or wearables. SLMs are not meant to replace LLMs. They are designed to deliver faster, more efficient performance on specific tasks or workflows.
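Here is a rough illustration of the size gap. The memory needed just to hold a model's weights is approximately its parameter count multiplied by the bytes used per parameter. The sketch below is built on three assumptions:

- The two model sizes (3 billion and 70 billion parameters) are illustrative and do not come from the sources cited in this issue.
- The helper name `weight_memory_gb` is hypothetical.
- The estimate ignores activations, caches, and runtime overhead.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# Model sizes below are illustrative assumptions, not from the cited sources.
# Ignores activations, KV cache, and framework overhead.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    models = [("small model (3B)", 3e9), ("large model (70B)", 70e9)]
    for name, params in models:
        for precision in ("fp16", "int4"):
            gb = weight_memory_gb(params, precision)
            print(f"{name}, {precision}: ~{gb:.1f} GB")
```

On these assumptions, the 3B model needs about 6 GB for its weights at 16-bit precision, or about 1.5 GB at 4-bit. The 70B model needs about 140 GB at 16-bit. This arithmetic is a big part of why smaller models are the ones considered for local and edge deployment.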

Q2: Why is healthcare interested in SLMs?

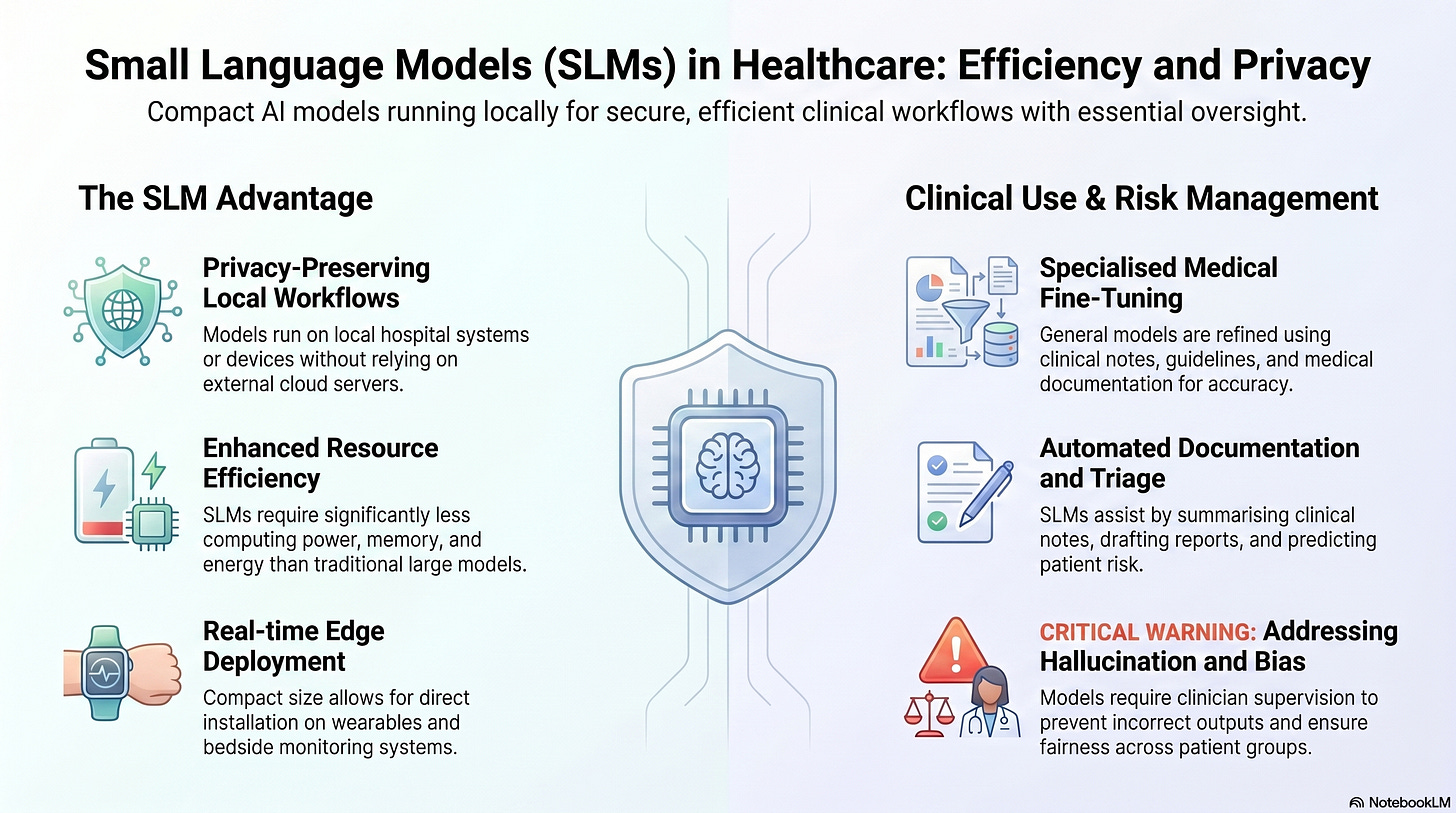

Healthcare is interested in SLMs because they can run locally without relying heavily on cloud servers, which may help support privacy-preserving workflows for sensitive patient data. They are also faster, cheaper to operate, and less energy-intensive than larger AI models, making them useful in resource-limited settings. Their smaller size allows deployment on edge devices such as wearables or bedside systems for real-time support.

Q3: How are SLMs prepared for medical tasks?

SLMs are usually adapted from existing general-purpose AI models and then trained further using medical data such as clinical notes, guidelines, or reports. Techniques like fine-tuning help them specialise in specific tasks, while knowledge distillation trains smaller models using outputs from larger “teacher” models. Other methods, such as pruning and quantization, reduce computing and memory requirements so the models can run efficiently on local devices. Unlike large internet-scale models, SLMs often rely on smaller, carefully curated datasets focused on specific domains. However, technical training alone does not make a model clinically reliable — proper validation, governance, and clinician oversight remain essential.
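To make two of these techniques more concrete, here is a minimal pure-Python sketch of quantization and knowledge distillation. Pruning is not shown. The sketch uses common textbook variants, not the method of any specific medical SLM:

- **Quantization:** symmetric, per-tensor int8 quantization.
- **Distillation:** a temperature-scaled KL-divergence loss against a teacher's outputs.

The function names and the toy numbers are hypothetical. Real projects would use a framework such as PyTorch rather than plain lists.

```python
import math


# --- Quantization: store weights as 8-bit integers plus one float scale ---
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Approximately recover the original floats from the int8 values."""
    return [v * scale for v in q]


# --- Knowledge distillation: the student learns from the teacher's soft outputs ---
def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature gives softer probabilities."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence from the teacher's soft targets to the student's predictions."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_teacher, p_student))
    # Scaling by T^2 (as in Hinton et al., 2015) keeps gradient size comparable across temperatures
    return kl * temperature ** 2


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.05, 0.90]  # toy values, not real model weights
    q, scale = quantize_int8(weights)
    print("int8 values:", q, "scale:", scale)
    print("reconstructed:", dequantize(q, scale))

    teacher = [2.0, 1.0, 0.1]  # hypothetical teacher logits for 3 classes
    student = [1.5, 1.2, 0.3]  # hypothetical student logits
    print("distillation loss:", distillation_loss(student, teacher))
```

**Quantization trade-off.** Each int8 value takes 1 byte instead of the 4 bytes of a 32-bit float, roughly a 4x memory saving. The cost is a small rounding error of at most half the scale per weight.

**Distillation loss.** The loss is zero when the student matches the teacher exactly. It grows as their predicted probabilities diverge, and this is the signal used to train the smaller model.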

Q4: What clinical tasks are SLMs being explored for?

SLMs are being explored for tasks such as summarising clinical notes, drafting reports, correcting documentation errors, and assisting with triage or risk prediction. They are also being studied for wearable-based monitoring, patient interaction tools, mental health chatbots, and administrative automation. Because they can run efficiently on local devices, they may be particularly useful for real-time or edge-based healthcare applications.

Q5: What limitations and risks should clinicians understand?

SLMs can still generate incorrect or misleading information and present it confidently as fact, a problem known as hallucination. Biases in training data may also affect fairness and reliability across different patient groups. In addition, SLMs usually perform best within narrow, well-defined tasks and may not generalise safely beyond them. For these reasons, they require careful testing, validation, and clinician supervision before being used in patient care.

Further Reading

Garg, Muskan, et al. The Rise of Small Language Models in Healthcare: A Comprehensive Survey. arXiv, 25 Apr. 2025.

Sharma, R., and A. Patel. The Evolution of Small Language Models in Healthcare: A Narrative-Evolutionary Literature Review. British Journal of Multidisciplinary and Advanced Studies, 2025.

Magnini, Matteo, Gianluca Aguzzi, and Sara Montagna. Open-Source Small Language Models for Personal Medical Assistant Chatbots. Intelligence-Based Medicine 11 (2025): 100197.

🔬 Research Picks

Generative artificial intelligence in forensic medicine: a pilot study on AI-simulated medico-legal reports in healthcare liability cases | Int J Legal Med.: Customized GPT-based models were able to generate structured medico-legal reports with argumentative coherence comparable to human experts in healthcare liability cases, particularly for clinical synthesis. However, the models showed poor agreement in permanent impairment estimation and sometimes produced fabricated or unverifiable references, highlighting the continued need for expert human oversight in medico-legal practice.

Impact of Artificial Intelligence-supported Triage Systems on Emergency Department Management: A Comparison of Infermedica, Emergency Severity Index, and Manchester Triage System | West J Emerg Med.: An AI-supported emergency department triage system was associated with lower in-ED mortality, fewer complications, better resource allocation, and higher patient satisfaction compared with the Emergency Severity Index and Manchester Triage System in a study of 18,000 patients. However, the findings came from a single-center study with short follow-up, potential biases, and limited control for confounders, meaning larger multicenter studies are still needed before broad clinical adoption.

Physician-Reported Safety Outcomes of AI-Generated Hospital Course Summaries | JAMA Netw Open.: An LLM-based workflow for generating hospital discharge summaries was frequently adopted by physicians, showed minimal reported safety risk, and was associated with significantly lower physician burnout despite only modest time savings. Most AI-generated summaries were rated as having no harm potential, although omissions and inaccuracies were common, and the single-center pilot design limits conclusions about long-term safety, generalizability, and real-world impact across broader clinical settings.

Forecasting hospital bed occupancy: a time series approach with prophet | BMC Med Inform Decis Mak.: A simple and interpretable time-series model, Prophet, accurately forecasted mid-term hospital bed occupancy with low error rates, while offering easier deployment, lower operational costs, and greater transparency than many complex AI models. The study also demonstrated a production-ready forecasting pipeline and dashboard system, although performance may still be affected by data quality, unexpected systemic changes, and simplified handling of events like the COVID-19 pandemic.

P.S. Each research pick title links to the original paper. Do explore them yourself for deeper insights into the methodologies and study limitations.

✨ Industry Spotlight

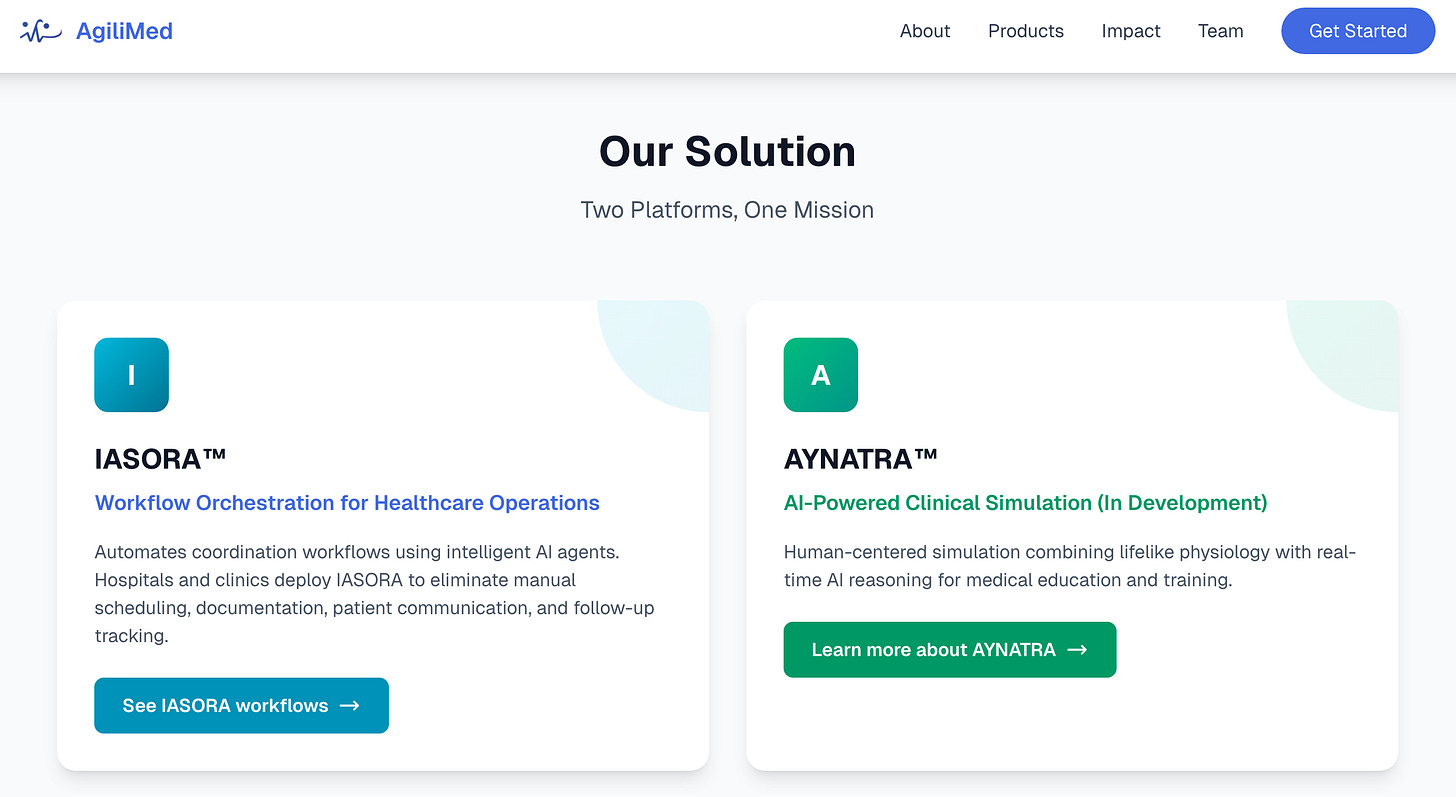

AgiliMed is a Melbourne-based healthtech company building IASORA, an AI-powered workflow orchestration platform for hospitals and clinics across APAC.

Rather than automating single tasks, it coordinates end-to-end processes — scheduling, documentation, patient communication, and follow-ups — using AI agents built on HL7 FHIR standards, with on-premise and air-gapped deployment options for data privacy compliance.

Their pitch is speed (2-4 weeks to go live), practical pricing across facility sizes, and a founding team with deep hands-on healthcare IT experience across Australia, India, and Southeast Asia. A clinical simulation product for medical education is also in development.

In short: workflow automation middleware for healthcare operations, positioned between point solutions and full enterprise EHR implementations.

*This ‘Industry Spotlight’ is editor-picked, not sponsored. Mention reflects interest, not endorsement.

📚 Learning Resources

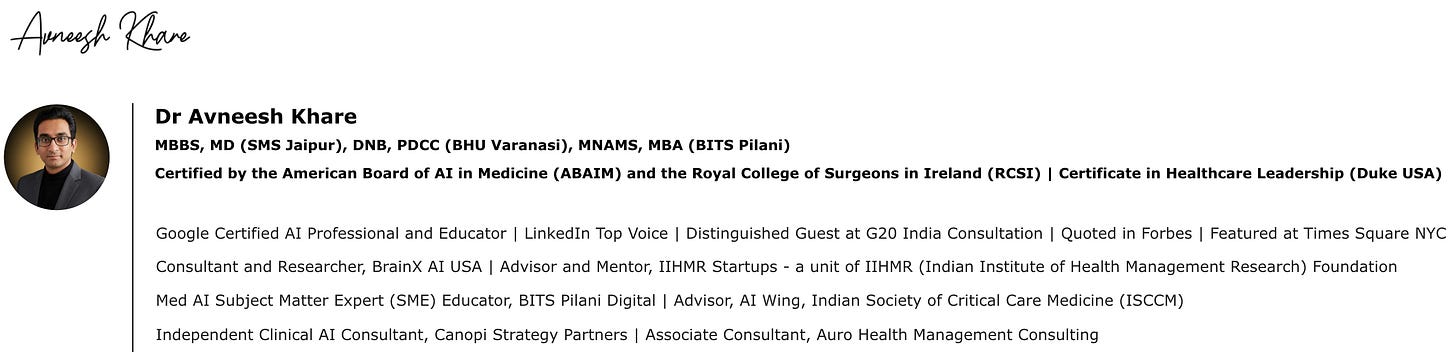

This video, AI in Healthcare: Module 15 (Algorithmic Fairness), features Dr. Avneesh Khare and moderator Mr. Rajiv Sharma discussing the crucial role of fairness when implementing AI in clinical settings.

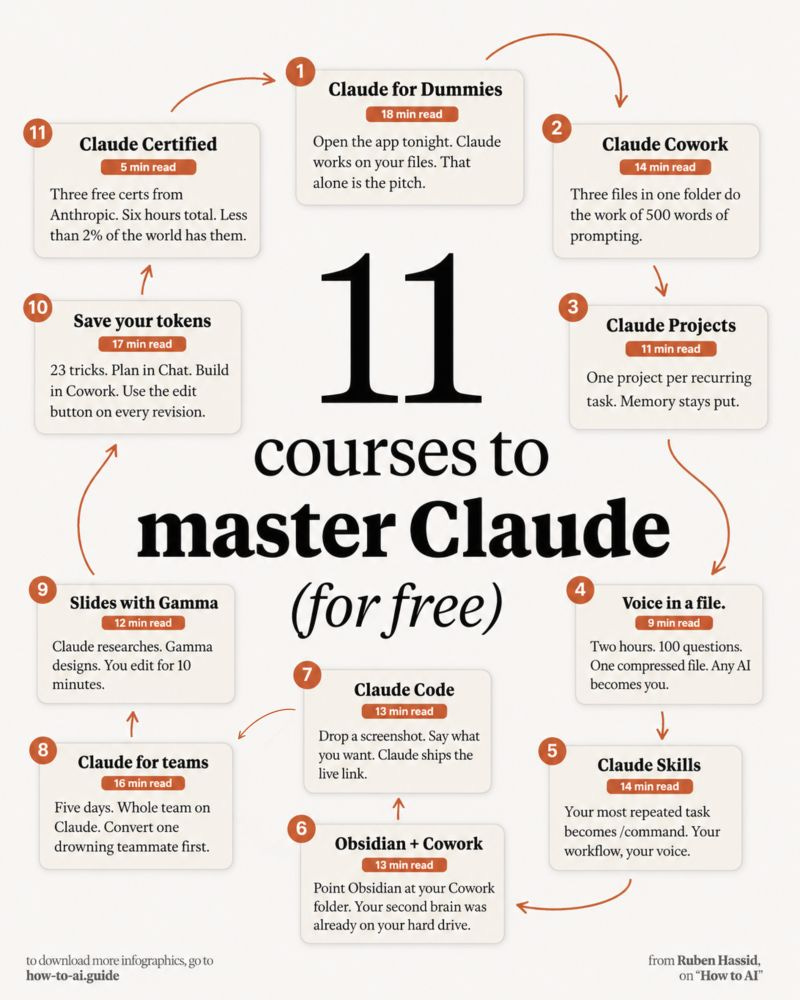

A curated list of practical, no-fluff AI learning resources (mostly around Claude) aimed at helping people build real skills without expensive courses.

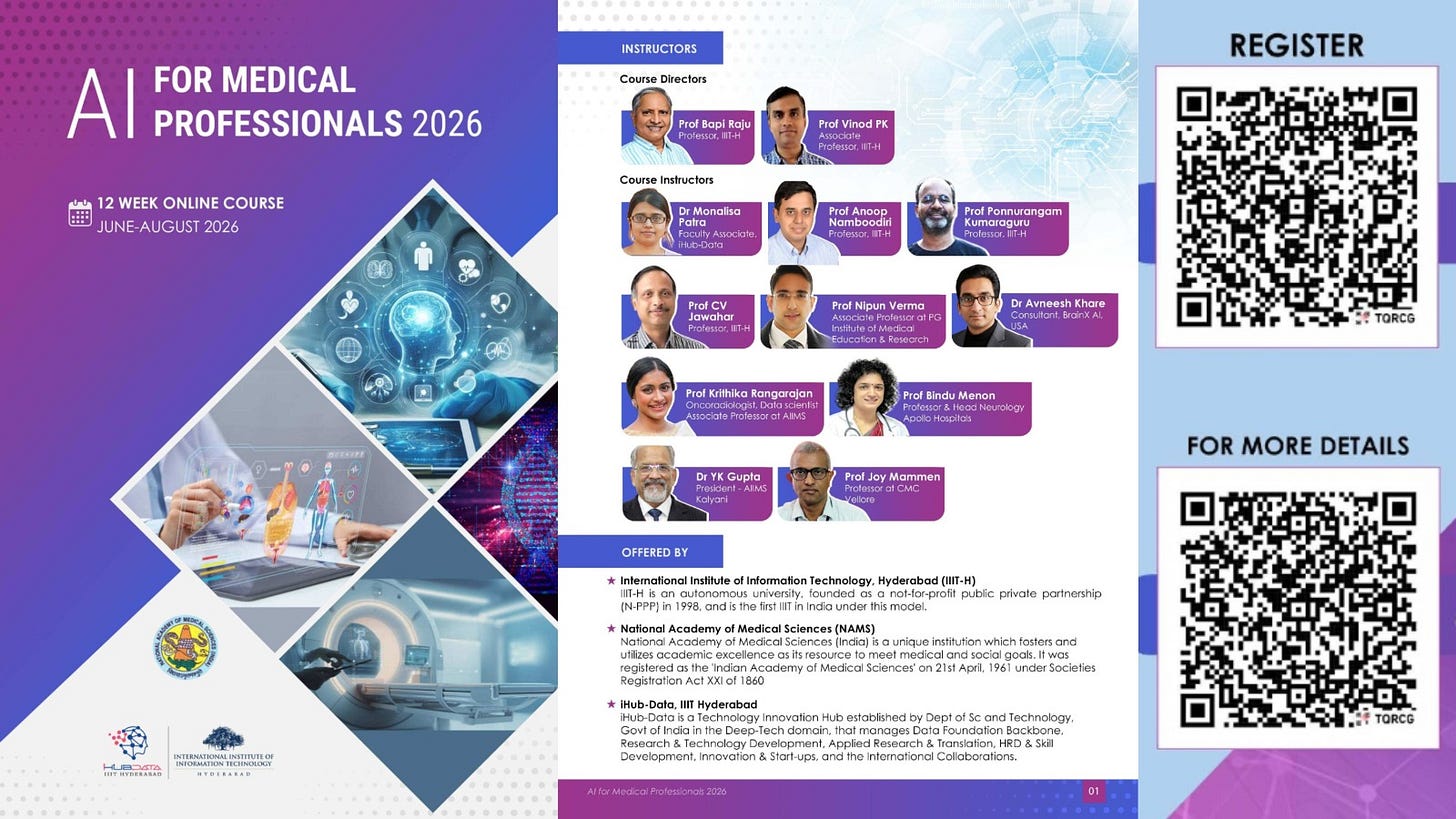

AI for Medical Professionals 2026: Online Certificate Course (3 Months) by IIIT Hyderabad and National Academy of Medical Sciences (NAMS), India.

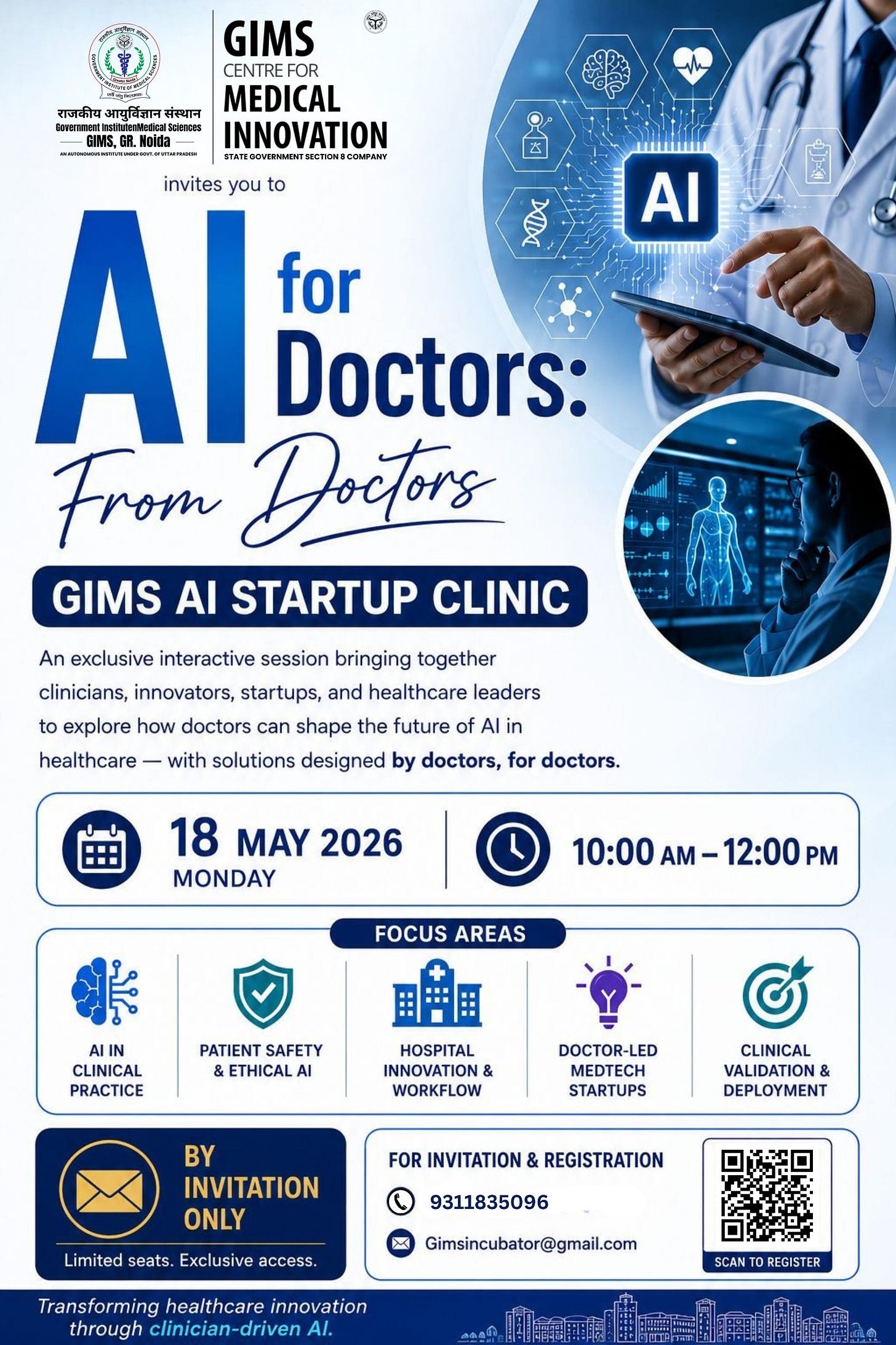

🧑‍💻 Worth-Attending Events

Your feedback is crucial to me, as it helps me understand your interests and improve my offerings. I would appreciate it if you could take a few minutes to share your thoughts about what you’ve enjoyed and what you think I could do better.

Let’s wrap it up with the latest news updates! 📰

OpenAI has launched a free AI assistant for U.S. clinicians that helps draft referrals, summarize research, streamline workflows, and support CME credits, with strong privacy safeguards and optional HIPAA compliance.

Utah’s medical board is urging suspension of a pilot where AI refills routine prescriptions, warning it risks patient safety despite state assurances of physician oversight and phased safeguards.

IndiaAI and ICMR have partnered to build an interoperable AI healthcare ecosystem by combining high-performance computing infrastructure with biomedical datasets to develop responsible, ethics-driven AI applications for public health challenges.

Stay tuned for upcoming issues of my newsletter to explore the latest breakthroughs and dive deep into how artificial intelligence is shaping a healthier future. 🚀

Disclaimer: The content in this newsletter was partly curated and summarized using AI large language models (LLMs), which can make mistakes. Please verify any important information independently. For any issues, please reach out at avneeshkhareonline@gmail.com.